PostgreSQL query optimizer: Function inlining

Note that the techniques listed here are in no way complete. There is a lot more going on, but it makes sense to look at the most basic things first in order to gain a good understanding of the process.

PostgreSQL constant folding

Constant folding is one of the simpler techniques to describe. Let’s see what happens during this process:

    Function Scan on generate_series x (cost=0.00..0.13 rows=1 width=4)

What you can see here is that we add a filter to the query: x = 7 + 1. What the system does is “fold” the constant and use “x = 8” instead. Why is that important? If “x” is indexed (assuming it is a table), we can easily look up 8 in the index.

    Function Scan on generate_series x (cost=0.00..0.15 rows=1 width=4)

What we see here is that PostgreSQL does not transform the expression to “x = 8” in this case. That’s why you should try to make sure that the calculation is on the right-hand side of the comparison, and not on the column you might want to index.
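To make the idea of constant folding concrete, here is a minimal toy sketch in Python. It is not how PostgreSQL implements the technique; it only assumes that an expression can be represented as a syntax tree, and it uses Python's `==` where SQL would use `=`. The names `ConstantFolder` and `fold` are made up for this illustration. Arithmetic on two literals is collapsed, while arithmetic that involves the column `x` is left alone, which mirrors the behavior described above.

```python
import ast
import operator

# Operators this toy folder knows how to evaluate.
_OPS = {ast.Add: operator.add, ast.Sub: operator.sub, ast.Mult: operator.mul}


class ConstantFolder(ast.NodeTransformer):
    """Replace arithmetic on two literal numbers with its result."""

    def visit_BinOp(self, node):
        self.generic_visit(node)  # fold nested sub-expressions first
        op = _OPS.get(type(node.op))
        both_literals = (
            isinstance(node.left, ast.Constant)
            and isinstance(node.right, ast.Constant)
            and isinstance(node.left.value, (int, float))
            and isinstance(node.right.value, (int, float))
        )
        if op is not None and both_literals:
            folded = ast.Constant(op(node.left.value, node.right.value))
            return ast.copy_location(folded, node)
        return node  # anything touching a name (such as x) stays untouched


def fold(expr: str) -> str:
    """Parse an expression, fold its constants, and return it as source text."""
    tree = ast.parse(expr, mode="eval")
    folded = ast.fix_missing_locations(ConstantFolder().visit(tree))
    return ast.unparse(folded)  # requires Python 3.9+


print(fold("x == 7 + 1"))   # the constant side is folded
print(fold("x - 1 == 7"))   # the column side is not rewritten
```

The second call returns the expression unchanged: the folder never moves a term across the comparison, so a filter written against a modified column cannot be matched to a plain index lookup on that column.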

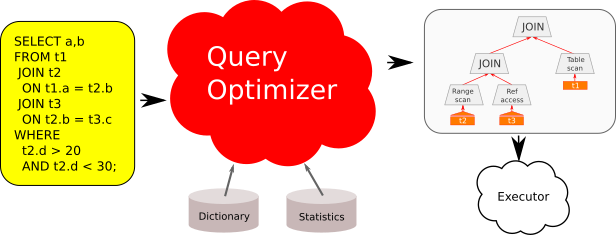

Just like any advanced relational database, PostgreSQL uses a cost-based query optimizer that tries to turn your SQL queries into something efficient that executes in as little time as possible. For many people, the workings of the optimizer itself remain a mystery, so we have decided to give users some insight into what is really going on behind the scenes.

So let’s take a tour through the PostgreSQL optimizer and get an overview of some of the most important techniques the optimizer uses to speed up queries.

We have a database with tens of millions of records and we are running into performance issues.

After analysing the applications using this database, we created a new indexing policy which EXCLUDES all fields by default, but then INCLUDES all properties that have been requested in the WHERE / ORDER BY queries used by these applications, including multiple composite indexes on the partition key field plus other key identifiers.

I then ran a simple script which executed all the queries with diagnostics enabled to see how the DB query optimizer would handle those queries. The result was that every single one of them reported that it "Utilised Composite Index" with "Impact: High" which, to me, was the exact result I wanted. In fact, the whole reason for this effort was that one particular query was taking 100 seconds to run for 350 records, and after the above exercise this query now executes in under 1 second.

Now that the index policy is in place, we are experiencing pegged 100% RU consumption and a high number of 429 error codes.

My theory is that there are some other applications out there that we don't know about using this database, and their queries have not been taken into account for the indexing optimisation. In Azure Portal, is there a way to record every single query being sent to the database, for say 24 hours, so that we can see if there are any "rogue" queries being executed, so we can then look at them and include them in the indexing policy?

Welcome to Microsoft Q&A forum and thanks for using Azure Services.

As I understand, you want to trace all queries being executed on Cosmos DB.

Azure Cosmos DB provides advanced logging for detailed troubleshooting. By enabling full-text query, you're able to view the deobfuscated query for all requests within your Azure Cosmos DB account. You also give permission for Azure Cosmos DB to access and surface this data in your logs.

Use the Enable full-text query setting to log query text. This setting is applied within a few minutes. All newly ingested logs have the full-text or PIICommand text for each request.

Enabling this feature may result in additional logging costs; for pricing details, visit Azure Monitor pricing. It is recommended to disable this feature after troubleshooting.

The query metrics will help determine where the query is spending most of its time. From the query metrics, you can see how much of the time is being spent on the back end versus the client. Learn more in the query performance guide.

In the API for NoSQL SDKs, Azure Cosmos DB provides query execution statistics.

    IDocumentQuery<dynamic> query = client.CreateDocumentQuery(
        UriFactory.CreateDocumentCollectionUri(DatabaseName, CollectionName),
        "SELECT * FROM c WHERE c.city = 'Seattle'",
        // Assumed options (missing from the snippet as posted): metrics are
        // only returned when PopulateQueryMetrics is enabled.
        new FeedOptions { PopulateQueryMetrics = true }).AsDocumentQuery();

    FeedResponse<dynamic> result = await query.ExecuteNextAsync();

    // Returns metrics by partition key range Id
    IReadOnlyDictionary<string, QueryMetrics> metrics = result.QueryMetrics;

QueryMetrics provides details on how long each component of the query took to execute. The most common root cause for long-running queries is scans, meaning the query was unable to apply the indexes. This problem can be resolved with a better filter condition.

Let us know if you have further queries.
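The opt-in indexing policy described in the question (exclude everything by default, then include only the queried properties plus composite indexes) could be sketched roughly as follows. This is an illustration under assumptions, not the poster's actual policy: the helper `build_indexing_policy` and the property paths `/city`, `/status`, `/tenantId` and `/createdAt` are hypothetical, and only the overall JSON shape follows the Azure Cosmos DB indexing policy format.

```python
import json


def build_indexing_policy(included_paths, composite_indexes):
    """Build an opt-in policy: exclude every path, then include the listed ones."""
    return {
        "indexingMode": "consistent",
        "automatic": True,
        # "/?" targets the scalar value stored at each included path.
        "includedPaths": [{"path": f"{path}/?"} for path in included_paths],
        # "/*" excludes everything that is not explicitly included.
        "excludedPaths": [{"path": "/*"}],
        # Each composite index is an ordered list of (path, sort order) pairs.
        "compositeIndexes": [
            [{"path": path, "order": order} for path, order in index]
            for index in composite_indexes
        ],
    }


# Hypothetical property names, chosen only for illustration.
policy = build_indexing_policy(
    included_paths=["/city", "/status"],
    composite_indexes=[[("/tenantId", "ascending"), ("/createdAt", "descending")]],
)
print(json.dumps(policy, indent=2))
```

With a policy of this shape, any query that filters or sorts on a property outside the included paths cannot use an index and falls back to a scan, which is consistent with the concern about unknown applications driving up RU consumption.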
